This study material has been compiled from a lecture audio transcript and a summary document.

📚 Understanding Syntax Analysis and Parsing in Language Implementation

🎯 Introduction to Language Processing

Syntax analysis and parsing are fundamental concepts in the implementation of programming languages. They form the bedrock of how source code is transformed into executable instructions, making them crucial for anyone interested in the inner workings of compilers and interpreters. This guide will explore the essential components and methodologies involved in processing programming languages, focusing on lexical analysis and various parsing techniques, particularly the prominent LR parsers.

1️⃣ Lexical Analysis: The First Step 📝

Before a program's structure can be fully understood, it must first be broken down into its most basic components. This initial phase is handled by the lexical analyzer.

✅ Role of the Lexical Analyzer

- Pattern Matcher: The lexical analyzer acts as a pattern matcher, scanning the input program.

- Token Isolation: Its primary function is to isolate small-scale parts of the program, known as tokens.

- Input for Parsing: These tokens form a structured stream that serves as the input for the subsequent parsing phase.

📚 What are Tokens?

Tokens are the basic building blocks of a language. They represent meaningful units such as:

- Keywords: Reserved words (e.g.,

int,if,while). - Identifiers: Names given to variables, functions, etc. (e.g.,

x,myFunction). - Operators: Symbols performing operations (e.g.,

=,+,-). - Literals: Constant values (e.g.,

10,"hello").

💡 Example: Tokenization

Consider the statement: int x = 10;

The lexical analyzer would identify:

intas a keywordxas an identifier=as an operator10as a literal

This process effectively breaks down raw source code into well-defined units, preparing it for deeper syntactic scrutiny.

2️⃣ Parsing Fundamentals: Building Structure 🌳

Following lexical analysis, the parsing phase begins. This stage is critical for understanding the grammatical structure of the program.

✅ Primary Objectives of a Parser

- Detect Syntax Errors: Ensure the program adheres to the language's grammatical rules.

- Produce a Parse Tree: Generate a hierarchical representation of the program's syntactic structure.

📚 The Parse Tree

A parse tree visually depicts how expressions are grouped and how statements are structured according to the grammar. It is vital for subsequent phases like semantic analysis and code generation.

📊 Types of Parsers

Parsers are broadly categorized into two main types based on how they construct the parse tree:

- Top-Down Parsers: Start from the grammar's start symbol and try to derive the input string.

- Bottom-Up Parsers: Start from the input symbols and try to reduce them to the grammar's start symbol.

3️⃣ Top-Down Parsing: From Root to Leaves ⬇️

Top-down parsers attempt to build the parse tree from the root (start symbol) down to the leaves (input tokens).

📚 Recursive-Descent Parsers (LL Parsers)

- Example: A notable example of a top-down parser is the recursive-descent parser.

- Classification: These are classified as LL parsers.

- L1 (Left-to-right scan): They process the input from left to right.

- L2 (Leftmost derivation): They construct a leftmost derivation of the input.

- Mechanism: Recursive-descent parsers typically involve a set of recursive procedures, where each procedure corresponds to a non-terminal in the grammar. They attempt to match the input to the grammar rules by expanding non-terminals from the top of the parse tree downwards.

4️⃣ Bottom-Up Parsing: From Leaves to Root ⬆️

Bottom-up parsers build the parse tree from the input symbols (leaves) up to the start symbol (root).

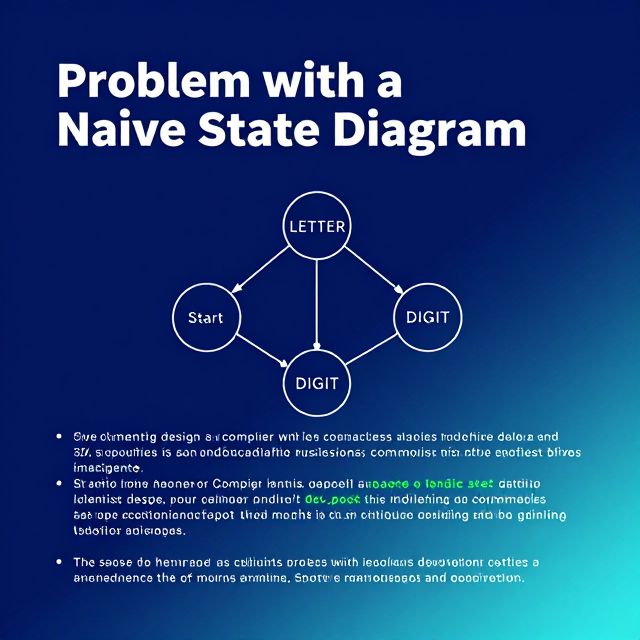

⚠️ The Parsing Problem for Bottom-Up Parsers

The core challenge for bottom-up parsers is to identify the correct substring of the current sentential form that corresponds to the right-hand side of a production rule. This specific substring is known as a handle. The parser must correctly locate this handle and reduce it to its corresponding non-terminal.

💡 Example: Handle Reduction

Consider a grammar rule: Expression -> Number + Number

If the parser sees the input sequence 5 + 3, it needs to:

- Identify

5 + 3as the handle. - Reduce this handle to

Expression.

📚 LR Parsers: The Most Common Approach

The LR family of shift-reduce parsers is the most common and widely used bottom-up parsing approach in compiler design.

✅ Key Characteristics of LR Parsers

- Shift-Reduce Mechanism: They operate by shifting input tokens onto a stack and then reducing sequences of symbols on the stack to non-terminals according to grammar rules.

- Rightmost Derivation in Reverse: A key characteristic of an LR parser is its ability to trace a rightmost derivation in reverse.

- Instead of starting from the grammar's start symbol and deriving the rightmost non-terminal at each step, an LR parser begins with the input string.

- It then works backward, identifying the production rules that were applied last in a rightmost derivation sequence.

- Efficiency and Power: This reverse tracing allows for efficient and powerful syntax analysis. LR parsers can handle a large class of grammars and are known for their ability to detect errors early in the parsing process, making them a cornerstone in compiler design.