📚 Chapter 4: Lexical and Syntax Analysis - Study Guide

Source Information: This study material has been compiled from a lecture audio transcript and copy-pasted text provided by the user.

🎯 Introduction to Language Analysis

Language implementation systems, whether they rely on compilation, interpretation, or hybrid approaches (like Just-In-Time (JIT) compilation), all share a fundamental initial step: the thorough analysis of source code. Before any program can be executed, the system must fully comprehend its structure as written by the programmer. This foundational understanding is almost universally based on a formal description of the source language's syntax, most commonly utilizing Backus-Naur Form (BNF).

The process of analyzing source code involves two primary phases:

- Lexical Analysis (Scanning): The initial phase where raw source code is broken down into basic, meaningful units.

- Syntax Analysis (Parsing): The subsequent phase where these units are checked against the language's grammar rules to ensure structural correctness.

The ultimate output of this analytical process is typically a Parse Tree, which visually represents the hierarchical structure of the program, illustrating how different parts of the code are organized according to the language's defined grammar.

🔍 The Two Phases of Language Processing

The syntax analysis step of a language processor is typically divided into two main components: the Lexical Analyzer and the Syntax Analyzer.

1. Lexical Analysis (Scanning) 📝

The Lexical Analyzer, often called the Scanner, performs the low-level analysis of the source code.

✅ Role:

- Processes the raw sequence of characters directly from the source program.

- Groups these characters into meaningful units called lexemes.

- Assigns each lexeme to a specific token category.

📚 Tokens: Tokens are the basic building blocks of a program. They represent categories of lexemes.

- Examples of Token Categories:

identifiers(e.g.,myVariable,calculateSum)keywords(e.g.,if,while,for,int)numeric literals(e.g.,123,3.14)operators(e.g.,+,-,=,*)delimiters(e.g.,;,(,))

💡 Implementation:

- In most language processors, the lexical analyzer is implemented as a dedicated function or module.

- The parser calls the lexical analyzer whenever it needs the next token from the source program.

- Steps for each call:

- Reads characters from the input program.

- Groups them into a lexeme.

- Determines the token category of the lexeme.

- Returns both the token and the lexeme to the parser.

- This means the parser does not directly process individual characters; instead, it operates on the stream of tokens provided by the lexical analyzer.

2. Syntax Analysis (Parsing) 🌳

The Syntax Analyzer, also known as the Parser, performs the high-level analysis of the program structure.

✅ Role:

- Works directly with the tokens produced by the lexical analyzer.

- Determines whether the sequence of tokens forms a syntactically valid program according to the language's grammar rules.

- Analyzes larger program structures.

📚 Structures Analyzed:

Expressions(e.g.,a + b * c)Statements(e.g.,x = y;,if (condition) { ... })Program Blocks(e.g.,{ ... })Complete Program Units(e.g., functions, classes)

📐 Formal Description of Syntax: Backus-Naur Form (BNF)

Nearly all syntax analyzers are based on a formal description of the programming language syntax. The most common formal notation is Backus-Naur Form (BNF).

✅ Purpose of BNF:

- BNF is used to describe context-free grammars (CFG), which define the structural rules of programs.

- It provides a precise and unambiguous way to specify the syntax of a programming language.

📝 BNF Rule Example:

Consider the rule: <assignment> → <identifier> = <expression>

- This rule states that an assignment statement (

<assignment>) must consist of:- An

identifier(e.g., a variable name) - Followed by the assignment operator

= - Followed by an

expression(e.g., a value or calculation)

- An

- Parsers use such BNF rules to verify that programs adhere to the language's syntax.

🧠 Theoretical Models for Analysis

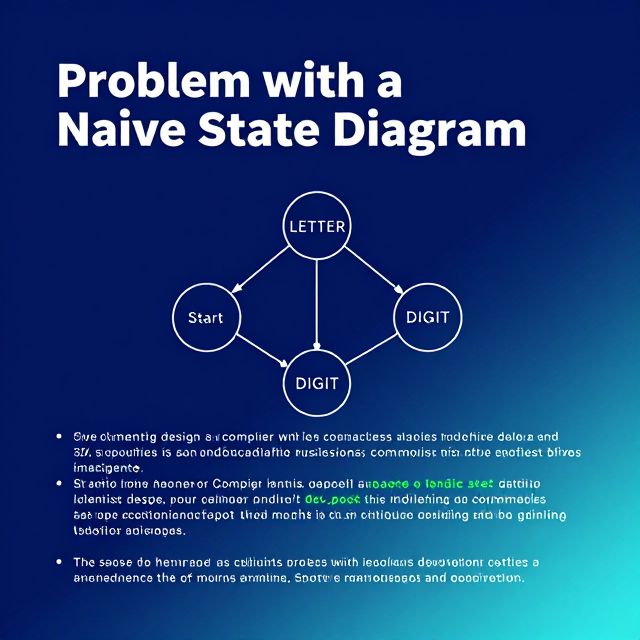

From a theoretical perspective, both lexical and syntax analysis can be modeled using specific computational machines and grammars.

-

Lexical Analyzer (Scanner):

- Can be modeled as a finite automaton.

- The patterns it recognizes (for tokens) are described using regular grammars or regular expressions.

- 📚 Regular Grammars: A formal, restricted type of grammar that generates regular languages, recognizable by finite automata. They are crucial for lexical analysis and pattern matching due to their efficiency.

-

Syntax Analyzer (Parser):

- Can be modeled as a pushdown automaton. This is a more powerful computational model than a finite automaton, capable of handling the recursive nature of programming language syntax.

- The grammar used for syntax analysis is a context-free grammar (CFG), commonly written using BNF.

🚀 Advantages of Separating Lexical and Syntax Analysis

Separating the overall syntax analysis into two distinct phases—lexical and syntax analysis—offers several significant benefits in compiler and interpreter design:

-

Simplicity ✅:

- Less complex approaches can be used for lexical analysis, as it deals with smaller, more localized patterns.

- Separating these concerns simplifies the design and implementation of the parser, allowing it to focus solely on the structural relationships between tokens rather than individual characters.

-

Efficiency 📈:

- The separation allows for the optimization of the lexical analyzer. Since it's a frequently called component, optimizing its performance (e.g., by using efficient finite automata implementations) can significantly speed up the overall analysis process.

-

Portability 🌍:

- Parts of the lexical analyzer might be platform-dependent (e.g., handling character encodings specific to an operating system).

- However, the parser, which operates on abstract tokens defined by the language's grammar, is generally more portable across different systems. This modularity makes it easier to adapt the language processor to new environments.

💡 Summary

In essence:

- Lexical analysis handles the smallest elements of the language, converting raw characters into meaningful tokens.

- Syntax analysis handles the structural relationships between these tokens, ensuring the program adheres to the language's grammar.

Together, these two phases form the crucial front-end of a compiler or interpreter, ensuring that a program is both lexically and syntactically correct before further processing can occur.